AI Is Repricing Labor, and the Legal System Is the First Real Constraint

For the past three years, the AI conversation has centered on raw capability: How intelligent will models become? How quickly will they displace knowledge workers? How massive must the data center buildout be?

In 2026, those are the wrong questions.

The right one is already being litigated in courtrooms, negotiated in union halls, and codified in regulatory agencies across three continents: What will institutions actually permit AI to do?

The widening gap between AI’s technical potential and its legal permissibility is where the next cycle of risk, return, and capital allocation will be decided. This is no longer a pure software story; it’s a systems story. Most of the market still hasn’t adjusted.

The Signal That Most People Missed

On May 1st, the Hangzhou Intermediate People’s Court in China delivered a ruling every CFO, fund manager, and policy analyst should study.

A quality assurance supervisor named Zhou reviewed large language model outputs at a tech firm. His role: ensuring the AI avoided illegal, harmful, or inaccurate content. Salary: ~300,000 yuan ($44,000).

The company automated his job, offered him a 40% pay cut in a lesser role, and fired him when he refused, citing “AI disruption” and reduced staffing needs.

Zhou won at arbitration, then at district court, then on appeal. The court ruled that AI adoption was a voluntary business choice to boost competitiveness. Shifting the risks of technological change onto the employee did not qualify as a “major change in objective circumstances” under Chinese labor law.

Translation: AI adoption is the company’s problem, not the worker’s.

This isn’t isolated.

Why This Isn’t Isolated

- European Union: The AI Act classifies workplace systems (recruitment, performance scoring, promotions, terminations) as high-risk. From August 2026, employers must carry out risk assessments and bias testing, maintain documentation, ensure human oversight, and notify affected workers. Fines are boardroom material.

- United States: Unions and litigation drive constraints. Recent contracts for writers, actors, dockworkers, nurses, and service workers include explicit AI guardrails. Algorithmic hiring/firing class actions are advancing.

- China: The Hangzhou case, timed near International Workers’ Day, signals Beijing’s stance: aggressive AI adoption yes, but not at the cost of social stability.

Three very different systems converge on one principle: AI adoption is a corporate decision. Its costs belong on the balance sheet, not the employee’s livelihood.

That principle will shape corporate strategy for the rest of the decade.

The Economics Don’t Match the Pitch Decks

Consensus narrative:

AI replaces worker → labor costs fall toward zero → margins explode → multiples expand.

Reality:

AI deployed → legal review → works council approval → severance → retraining → ongoing monitoring → delayed savings → partial margin gains (maybe).

Estimates such as those from Goldman Sachs (300 million FTE jobs exposed) and McKinsey (25% of work hours automatable) remain valid. Model inference costs have plummeted. But the binding constraint has shifted from compute to compliance.

The paradox of 2026–2027: AI gets dramatically cheaper while AI deployment gets more expensive — simultaneously.

Companies betting 2027 earnings on rapid headcount cuts are walking into a court, regulator, or strike.
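
To make the gap between the two chains above concrete, here is a minimal Python sketch of the arithmetic. Every name and number in it is a hypothetical placeholder chosen for illustration, not a figure from any report or case cited here. It assumes a simple model: one-time costs (legal review, severance, retraining) land up front, compliance costs recur every year, and salary savings begin only after an approval delay.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class DeploymentCase:
    """Hypothetical inputs for one automated role (illustrative only)."""
    annual_salary: int      # yearly cost of the role being automated
    annual_ai_cost: int     # yearly inference and tooling cost of the replacement
    one_time_costs: int     # legal review, severance, retraining
    annual_compliance: int  # ongoing monitoring, audits, documentation
    delay_years: int        # years of approvals or disputes before savings start


def cumulative_savings(case: DeploymentCase, horizon_years: int) -> List[int]:
    """Cumulative net savings at the end of each year of the horizon."""
    total = -case.one_time_costs
    results = []
    for year in range(1, horizon_years + 1):
        # Savings only accrue once the delay period has passed.
        if year > case.delay_years:
            total += case.annual_salary - case.annual_ai_cost
        # Compliance costs accrue every year, including during the delay.
        total -= case.annual_compliance
        results.append(total)
    return results


def payback_year(case: DeploymentCase, horizon_years: int) -> Optional[int]:
    """First year in which cumulative savings are non-negative, else None."""
    for year, value in enumerate(cumulative_savings(case, horizon_years), start=1):
        if value >= 0:
            return year
    return None


# "Pitch deck" case: no friction at all (assumed numbers).
pitch = DeploymentCase(100_000, 10_000, 0, 0, 0)
# "Reality" case: same role, with assumed one-time, recurring, and delay costs.
constrained = DeploymentCase(100_000, 10_000, 120_000, 15_000, 1)

print(cumulative_savings(pitch, 3))        # [90000, 180000, 270000]
print(cumulative_savings(constrained, 3))  # [-135000, -60000, 15000]
print(payback_year(constrained, 3))        # 3
```

Under these assumed inputs the same role pays back in year one on the pitch-deck model and only in year three once friction is included. The point is the shape of the curve, not the specific values, which will differ widely by jurisdiction and company.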

The Energy Wall Is Closer Than You Think

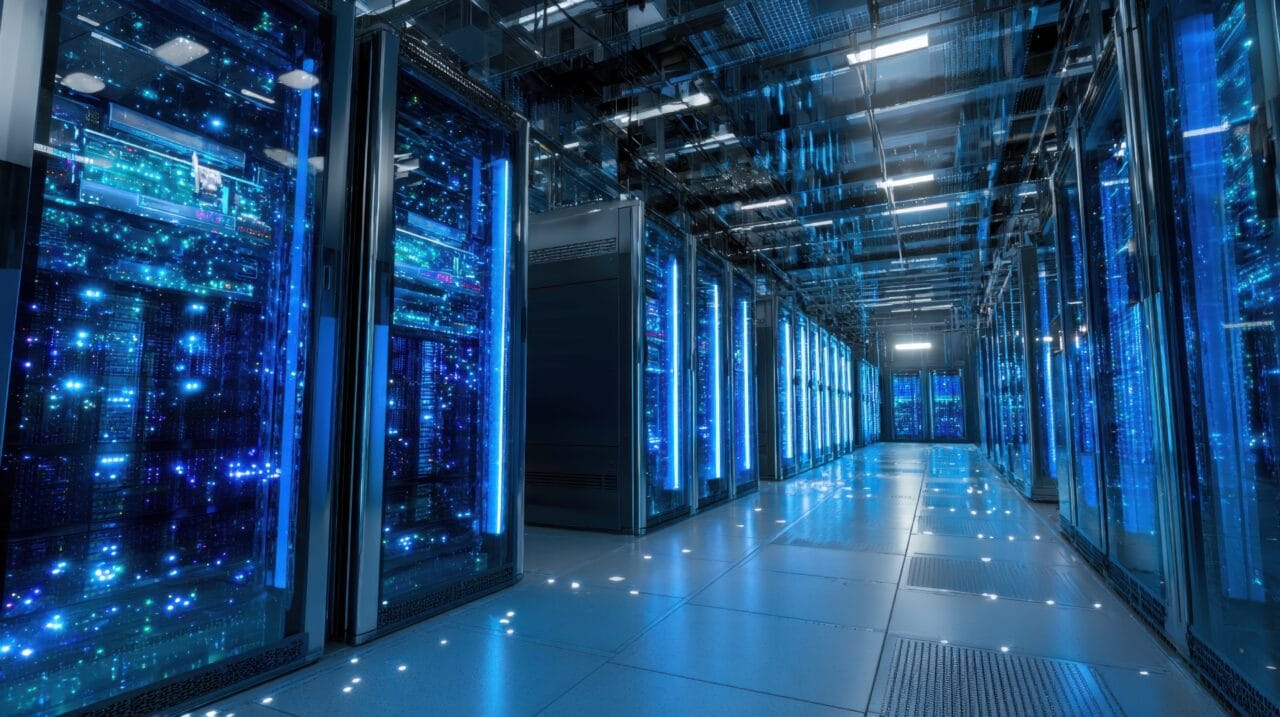

Even if legal barriers vanished overnight, energy remains the hard limit.

McKinsey projects ~$7 trillion in global data center capex by 2030 ($5.2T AI-specific). Annual AI infrastructure spend could triple to $1.5 trillion. Data centers could claim 9% of global electricity. U.S. grid queues stretch years; congestion costs have surged.

The bottleneck isn’t intelligence or even law; it’s electrons. Building reliable power takes 7–10 years and billions per project.

Where Capital Is Repricing

Attractive flows:

- Compute + energy stack (hyperscalers, chip designers, data center REITs, power generation, nuclear, grid tech).

- Compliance & governance layer (AI audit tools, employment legal tech, transition platforms).

- Augmentation tools (copilots and workflows that boost existing workers — legally safer, faster ROI).

- Energy infrastructure enablers (batteries, transmission, optimization).

Higher caution:

- Pure labor-arbitrage models (offshore BPO, low-skill digital labor platforms).

- Earnings narratives built on aggressive 2027–2028 headcount reduction.

- Jurisdictions lacking regulatory clarity.

How This Plays Out

Near-term (1–3 years): Precedents multiply. EU high-risk rules activate August 2026 with first fines in 2027. U.S. contracts embed more AI limits. Deployment tilts heavily toward augmentation. Employment-AI litigation becomes its own asset class.

Mid-term (3–7 years): Standardized “AI-compliant” enterprise frameworks emerge. Energy infrastructure becomes the dominant constraint. First uneven productivity gains appear in earnings.

Long-term (7–10+ years): Legal systems adapt. New labor categories form. Displacement accelerates within managed structures. Winners treat law and energy as core strategy inputs from day one.

The Closing Frame

Markets still price AI as a clean software revolution. It is actually a collision between exponential technology and linear institutions, and the institutions are holding the line longer than the hype suggests.

The true constraint is no longer intelligence. It is law, energy, and social stability.

Capital will reward those who deploy AI inside real-world constraints, without lawsuits, fines, strikes, or blackouts.

Watch the courts. Watch the grid. Watch the contracts.

The AI story is being written there, not in the demo videos.